AI ETHICS

Building Trust in AI Software

I was fascinated by AI’s power to automate complex tasks, solve problems, and even make decisions that typically require human judgment.

But as I dug deeper into the mechanics of AI, one question kept coming to mind: How do we ensure that AI is doing what it’s supposed to do?

More importantly, how do we ensure everyone affected by AI decisions understands what’s happening behind the scenes? That’s where the principle of transparency comes into play.

Transparency in AI isn’t just about ensuring the technical aspects are visible to a select group of developers or engineers. It’s about ensuring that the processes and decisions made by AI systems can be explained to all stakeholders — whether they’re technical experts, end users, or decision-makers.

AI must not be a “black box” where decisions are made but no one understands how or why.

This idea of transparency is essential when AI makes decisions that impact people’s lives. Whether deciding who gets a loan, determining the outcome of a legal case, or even influencing hiring decisions, transparency allows stakeholders to evaluate the risks and address any issues.

Full Disclosure: When AI is Making Decisions

One key aspect of transparency is being upfront when AI is involved in decision-making.

Let’s consider a scenario in the hiring process.

Imagine applying for a job, going through an interview, and later finding out that the final decision on whether you were hired was made by an AI system instead of a human.

I often think about this: Wouldn’t it be frustrating if you didn’t know an AI was involved? That’s why it’s so crucial for companies and organizations to disclose when AI systems are being used in decision-making processes.

People have a right to know if an algorithm is influencing the decisions that affect their lives.

It’s not just a matter of ethics — it’s about trust.

Let’s say a company uses an AI system to screen job applicants. Full disclosure would mean informing applicants upfront that an AI tool is part of the selection process, explaining how it works, and outlining what data it considers.

Without this transparency, candidates may feel uneasy about the outcome, especially if they are rejected without explanation.

Transparency gives people the opportunity to understand and even challenge decisions if needed.

The Purpose Behind the AI System

Another critical element of transparency is ensuring the AI system’s purpose is clear.

Take, for example, a facial recognition system used in security.

How many people understand the full extent of facial recognition’s purpose? Is it merely for security, or is it also used to track individuals for marketing purposes?

Stakeholders should always be aware of the purpose of the AI systems they interact with. For example, if a facial recognition system is used at an airport for security purposes, passengers must know precisely what the system is doing, what kind of data is being collected, and how it’s being used.

Without this clarity, there’s a risk of misuse or mistrust.

One real-world example is when social media platforms use AI to filter content.

If users are unaware that AI systems are screening and categorizing their posts, they might not understand why specific posts are taken down or flagged. This lack of transparency can create confusion and leave people feeling that their rights are being violated.

Understanding the Data: Bias and Quality Control

Whenever I think about AI transparency, the issue of training data comes to mind.

AI systems are only as good as the data they’re trained on, but often, the data contains biases that reflect historical or social inequalities. The data used to train AI must be disclosed and scrutinized to ensure fairness.

Take the example of AI systems used in the legal system.

Imagine an AI tool designed to predict the likelihood of someone reoffending after being released from prison. If the data used to train the AI is biased — perhaps it overrepresents specific communities — it could lead to unfair outcomes.

What if the AI system was unknowingly biased against a specific demographic? These biases could go unchecked without transparency about the training data, perpetuating discrimination.

In my view, transparency in AI isn’t just about disclosing that AI is being used — it’s also about being open about the data and processes behind it. Stakeholders need to know what historical and social biases might exist in the data, what procedures were used to ensure data quality, and how the AI system was maintained and assessed.
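To make the idea of scrutinizing training data a little more concrete, here is a minimal sketch of the kind of check I have in mind. It only counts how much of a dataset each group makes up and how often each group carries a positive label. The function name `audit_groups`, the field names `group` and `reoffended`, and the toy records are all hypothetical, chosen for illustration, and a real audit would need far more than this.

```python
from collections import Counter, defaultdict


def audit_groups(records, group_key, label_key):
    """Return each group's share of the dataset and its positive-label rate.

    `records` is a list of dicts. The key names are assumptions made for
    this sketch, not part of any real dataset or library.
    """
    if not records:
        raise ValueError("records must not be empty")

    counts = Counter(r[group_key] for r in records)
    positives = defaultdict(int)
    for r in records:
        if r[label_key]:
            positives[r[group_key]] += 1

    total = len(records)
    return {
        group: {
            "share": n / total,                      # how much of the data this group makes up
            "positive_rate": positives[group] / n,   # how often this group is labeled positive
        }
        for group, n in counts.items()
    }


# Hypothetical toy data: group A makes up most of the records.
records = [
    {"group": "A", "reoffended": 1},
    {"group": "A", "reoffended": 0},
    {"group": "A", "reoffended": 0},
    {"group": "B", "reoffended": 1},
]

report = audit_groups(records, "group", "reoffended")
for group, stats in sorted(report.items()):
    print(group, stats)
```

A large gap in either number does not prove the data is biased, but it is exactly the kind of thing stakeholders should be able to see and ask about.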

Maintaining and Assessing AI Systems

An often overlooked but equally important aspect of AI transparency is how these systems are maintained over time.

Just because an AI model works well today doesn’t mean it will work as expected tomorrow. What if the data changes or the system starts to degrade over time?

I always think of this in the context of healthcare. Imagine an AI system used to assist doctors in diagnosing patients. The system was trained on medical data several years ago, but medical knowledge and treatments have evolved rapidly since then. Without regular updates and assessments, the AI could become outdated, leading to inaccurate diagnoses.

Transparency means informing users about how the AI system works now and keeping them updated on how it’s maintained and monitored over time. This ensures that AI systems remain effective and fair.
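One simple way to picture this kind of ongoing assessment is to compare a model's recent accuracy against the accuracy it had when it was first deployed. The sketch below does only that. The function names and the 0.05 tolerance are my own assumptions for illustration, not a standard, and real monitoring would look at much more than a single number.

```python
def accuracy(predictions, outcomes):
    """Fraction of predictions that matched the real outcomes."""
    if len(predictions) != len(outcomes) or not outcomes:
        raise ValueError("need two non-empty lists of equal length")
    correct = sum(p == o for p, o in zip(predictions, outcomes))
    return correct / len(outcomes)


def needs_review(baseline_accuracy, recent_accuracy, tolerance=0.05):
    """Flag the model when accuracy has dropped by more than `tolerance`.

    The default tolerance is an arbitrary value chosen for this sketch.
    """
    return (baseline_accuracy - recent_accuracy) > tolerance


# Hypothetical numbers: accuracy at deployment versus a recent batch.
baseline = 0.90
recent = accuracy([1, 0, 1, 1], [1, 0, 0, 1])  # 3 of 4 correct

if needs_review(baseline, recent):
    print("Accuracy has dropped; schedule a human review and tell users.")
```

The point is less the arithmetic than the habit: the check runs on a schedule, and its results are shared with the people who rely on the system.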

The Right to Challenge AI Decisions

Finally, AI transparency must include people’s ability to challenge decisions made by AI systems.

This is crucial for building trust.

If someone feels an AI system has treated them unfairly, say, by denying them a loan or by incorrectly flagging them in a security check, they should have the right to question and appeal the decision.

I often ask myself: How would I feel if an AI made a decision about me, and I had no way to contest it? This is where transparency plays a pivotal role.

It’s not enough for people to know an AI system made a decision — they also need to know how to challenge that decision.

Transparency ensures that AI systems are held accountable, whether through a human review process or through clear channels for appeals.

Moving Forward with Transparent AI

It’s clear that transparency is not a luxury—it’s a necessity.

Without it, AI systems risk becoming tools people don’t trust or understand. To succeed, AI must be both effective and transparent in its processes, decisions, and data usage.

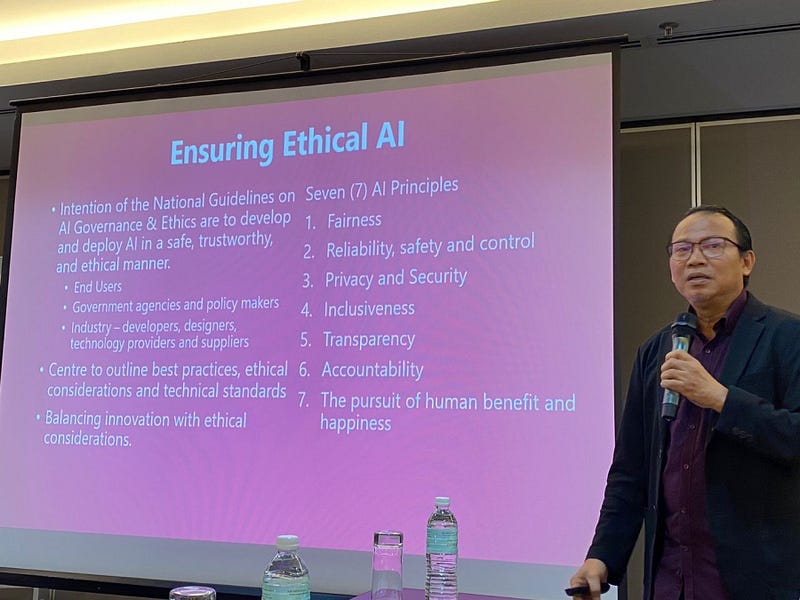

Transparency principles — whether they involve disclosing AI’s role in decision-making, clarifying its intended purpose, or allowing for challenges — are essential to building trust in AI systems.

This is the only way to ensure AI systems benefit everyone fairly and responsibly.